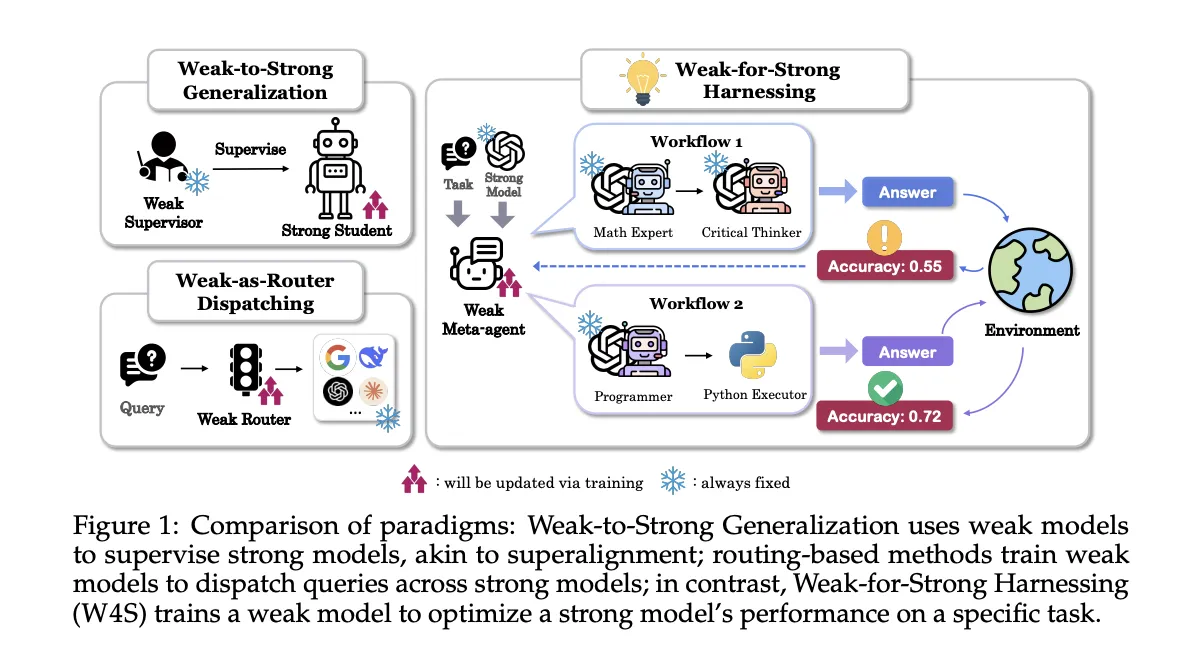

Researchers from Stanford, EPFL, and UNC introduce Weak-for-Strong Harnessing (W4S), a reinforcement learning (RL) framework that trains a small meta-agent to design and refine code workflows that call a stronger executor model. The meta-agent does not fine-tune the strong model; it learns to orchestrate it. W4S formalizes workflow design as a multi-turn Markov decision process (MDP) and trains the meta-agent with a method called Reinforcement Learning for Agentic Workflow Optimization (RLAO). The research team reports consistent gains across 11 benchmarks with a 7B meta-agent trained for about 1 GPU hour.

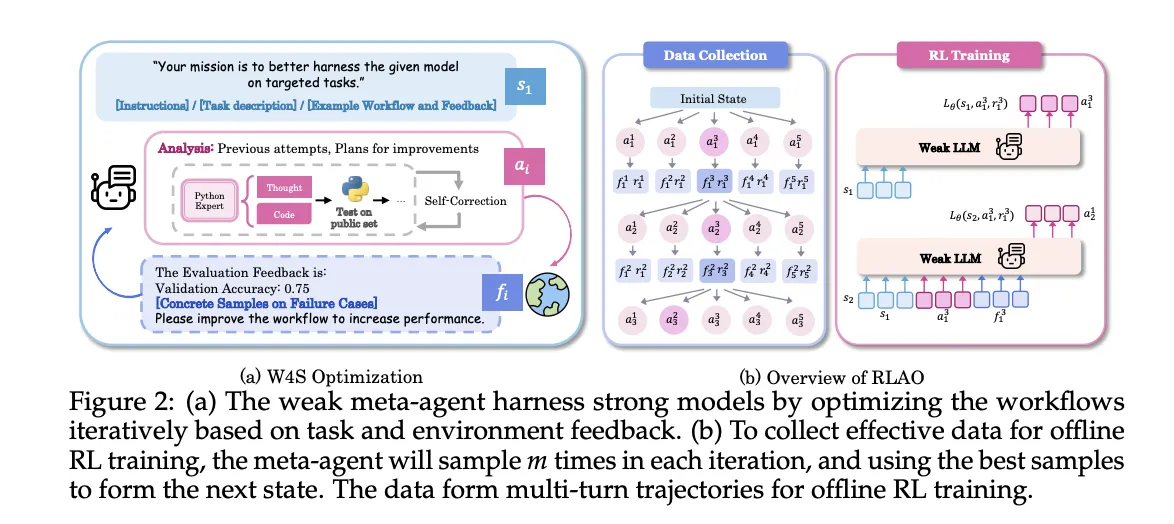

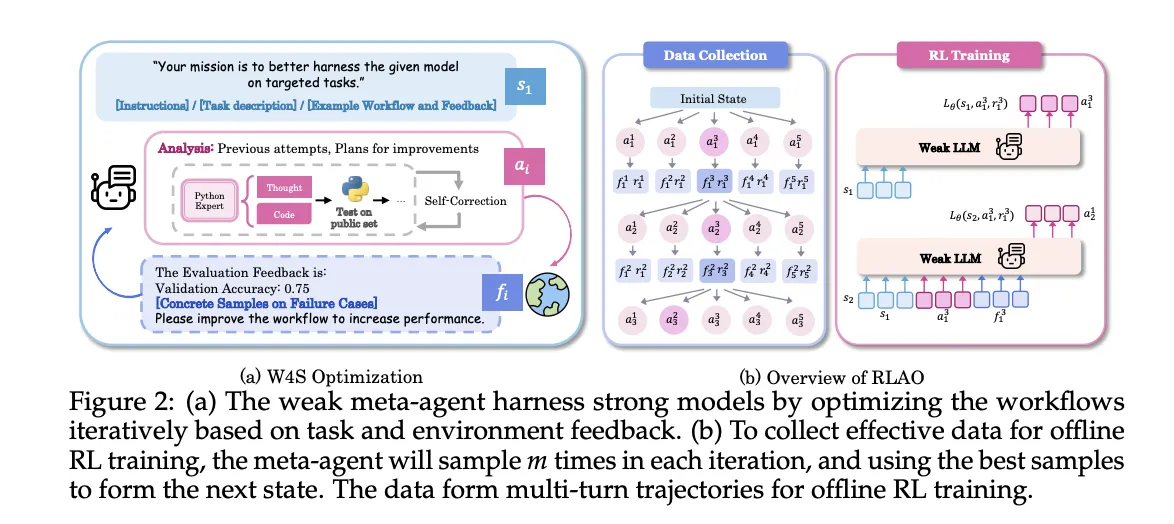

W4S operates in turns. The state contains the task instructions, the current workflow program, and feedback from prior executions. An action has 2 components: an analysis of what to change, and new Python workflow code that implements those changes. The environment executes the code on validation items, returns accuracy and failure cases, and provides a new state for the next turn. Before an action is committed, the meta-agent can run a quick self check on one sample. If errors arise, it attempts up to 3 repairs, and if errors persist, the action is skipped. This loop provides a learning signal without touching the weights of the strong executor.
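The self check and repair step described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `Action` dataclass, the `workflow(sample)` entry point, and the `repair` callback (standing in for a call back to the meta-agent) are all hypothetical names. It also runs code with a bare `exec`, which a real system would replace with a sandbox.

```python
from dataclasses import dataclass
from typing import Callable, Optional

MAX_REPAIRS = 3  # the article states up to 3 repair attempts


@dataclass
class Action:
    """One meta-agent action: an analysis plus new workflow code."""
    analysis: str
    workflow_code: str


def self_check(code: str, sample: str) -> Optional[str]:
    """Run the workflow on a single sample; return an error message or None.

    Assumes (hypothetically) that the code defines `workflow(sample)`.
    """
    namespace: dict = {}
    try:
        exec(code, namespace)  # unsandboxed, for illustration only
        namespace["workflow"](sample)
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return None


def check_and_repair(
    action: Action,
    sample: str,
    repair: Callable[[Action, str], Action],
) -> Optional[Action]:
    """Return a working action, or None if it should be skipped."""
    error = self_check(action.workflow_code, sample)
    attempts = 0
    while error is not None and attempts < MAX_REPAIRS:
        action = repair(action, error)  # ask the meta-agent for a fix
        attempts += 1
        error = self_check(action.workflow_code, sample)
    return action if error is None else None
```

Returning `None` for a persistently failing action mirrors the "skip" behavior, so the caller can move on without spending a full validation run on broken code.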

W4S runs as an iterative loop:

- Workflow generation: The weak meta-agent writes a new workflow that leverages the strong model, expressed as executable Python code.

- Execution and feedback: The workflow runs on validation samples, calling the strong model as its executor, and the environment returns accuracy and error cases as feedback.

- Refinement: The meta-agent uses the feedback to update the analysis and the workflow, then repeats the loop.
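The three steps above can be sketched as a small loop. Everything here is a toy stand-in under stated assumptions: `propose` plays the role of the meta-agent, `stub_executor` plays the strong model, exact string match is the accuracy metric, and all function names are hypothetical rather than taken from the W4S codebase.

```python
from typing import Callable, List, Tuple

Item = Tuple[str, str]                      # (question, expected answer)
Executor = Callable[[str], str]             # stand-in for the strong model
Workflow = Callable[[str, Executor], str]   # a workflow calls the executor
Record = Tuple[float, List[Item]]           # (accuracy, failure cases)


def evaluate(workflow: Workflow, executor: Executor,
             val_items: List[Item]) -> Record:
    """Run the workflow on validation items; return accuracy and failures."""
    failures = [(q, expected) for q, expected in val_items
                if workflow(q, executor) != expected]
    accuracy = 1.0 - len(failures) / len(val_items)
    return accuracy, failures


def optimize(propose: Callable[[List[Record]], Workflow],
             executor: Executor, val_items: List[Item], turns: int = 10):
    """Generate, execute, and refine for a fixed number of turns."""
    history: List[Record] = []
    best_workflow, best_accuracy = None, -1.0
    for _ in range(turns):
        workflow = propose(history)                       # generation
        record = evaluate(workflow, executor, val_items)  # execution
        history.append(record)                            # feedback
        if record[0] > best_accuracy:
            best_workflow, best_accuracy = workflow, record[0]
    return best_workflow, [accuracy for accuracy, _ in history]


def stub_executor(prompt: str) -> str:
    """Toy 'strong model' that upper-cases its prompt."""
    return prompt.upper()


def stub_propose(history: List[Record]) -> Workflow:
    """Toy meta-agent: ignore the executor first, then start calling it."""
    if not history:
        return lambda question, executor: question
    return lambda question, executor: executor(question)
```

With `val_items = [("a", "A"), ("b", "B")]`, the first proposed workflow fails every item, and later turns recover by delegating to the executor. The default of 10 turns matches the budget the article reports for the main tables.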

Reinforcement Learning for Agentic Workflow Optimization (RLAO)

RLAO is an offline reinforcement learning procedure over multi-turn trajectories. At each iteration, the system samples multiple candidate actions, keeps the best-performing action to advance the state, and stores the others for training. The policy is optimized with reward-weighted regression. The reward is sparse and compares current validation accuracy to the history: a higher weight is given when the new result beats the previous best, and a smaller weight is given when it only beats the last iteration. This objective favors steady progress while controlling exploration cost.
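A rough sketch of the reward shaping and the reward-weighted regression weighting follows. The specific reward values (1.0 and 0.5), the temperature `tau`, and the normalized exponential weighting are assumptions chosen for illustration; the article only states that beating the previous best earns more than beating the last iteration. Function names are hypothetical.

```python
import math
from typing import List

R_BEATS_BEST = 1.0  # assumed value for beating the previous best
R_BEATS_LAST = 0.5  # assumed value for beating only the last iteration


def reward(accuracy: float, history: List[float]) -> float:
    """Sparse reward comparing the current accuracy to past accuracies."""
    if not history:
        raise ValueError("history needs at least a baseline accuracy")
    if accuracy > max(history):
        return R_BEATS_BEST
    if accuracy > history[-1]:
        return R_BEATS_LAST
    return 0.0


def best_index(accuracies: List[float]) -> int:
    """Index of the best-performing candidate, used to advance the state."""
    return max(range(len(accuracies)), key=accuracies.__getitem__)


def rwr_weights(rewards: List[float], tau: float = 0.4) -> List[float]:
    """Normalized exp(r / tau) weights; tau is an assumed temperature."""
    exps = [math.exp(r / tau) for r in rewards]
    total = sum(exps)
    return [e / total for e in exps]


def rwr_loss(log_probs: List[float], rewards: List[float],
             tau: float = 0.4) -> float:
    """Weighted negative log-likelihood over stored actions."""
    weights = rwr_weights(rewards, tau)
    return -sum(w * lp for w, lp in zip(weights, log_probs))
```

Because the weights grow with reward, actions that set a new best accuracy dominate the loss, while actions that made no progress still contribute a small amount instead of being discarded.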

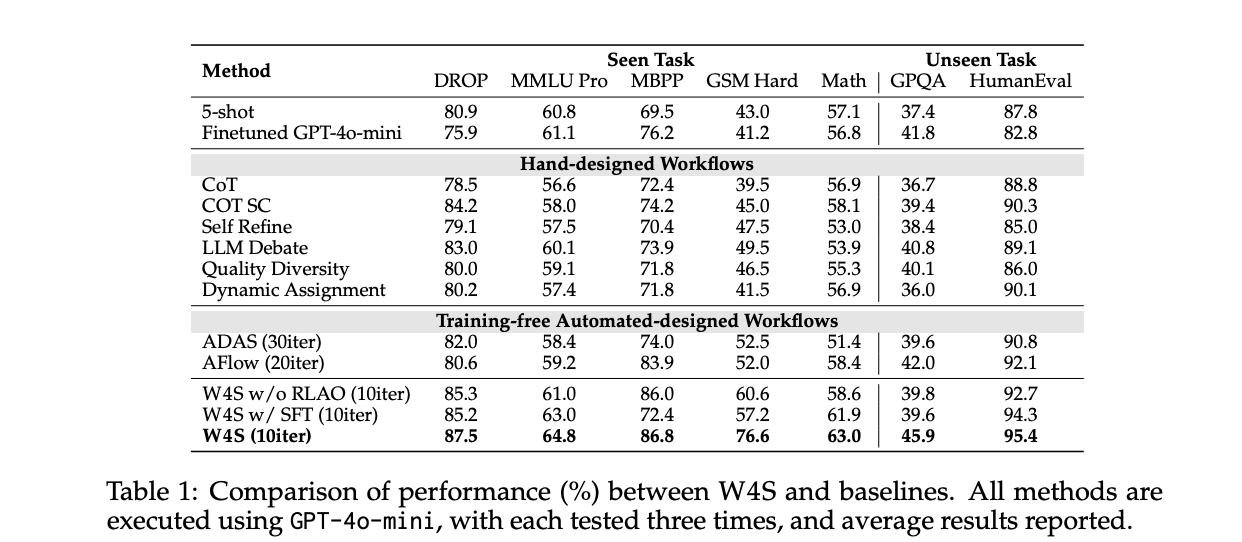

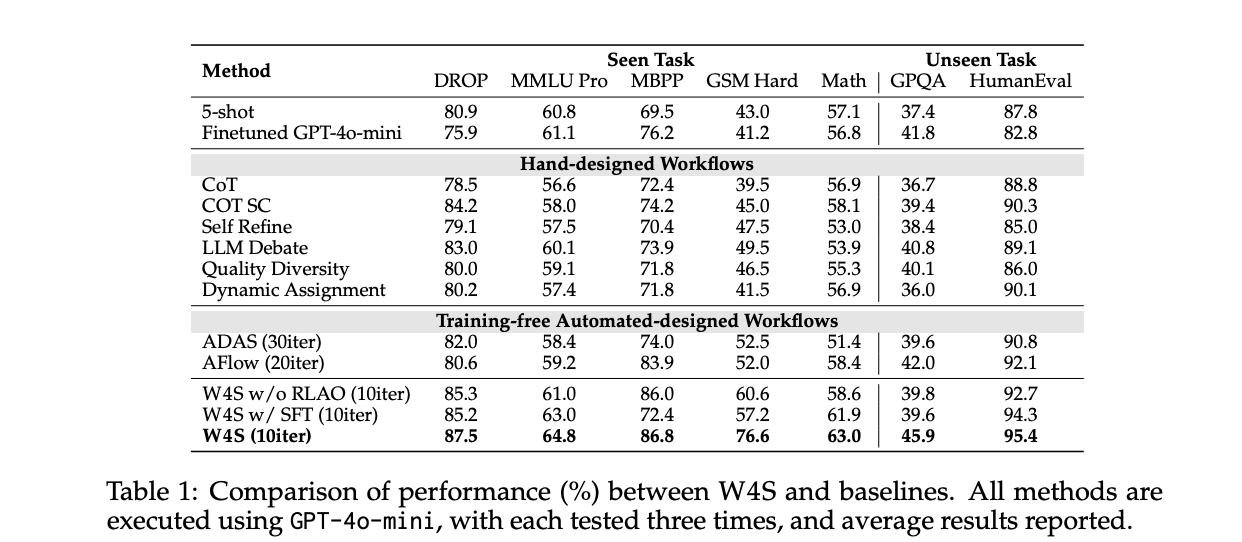

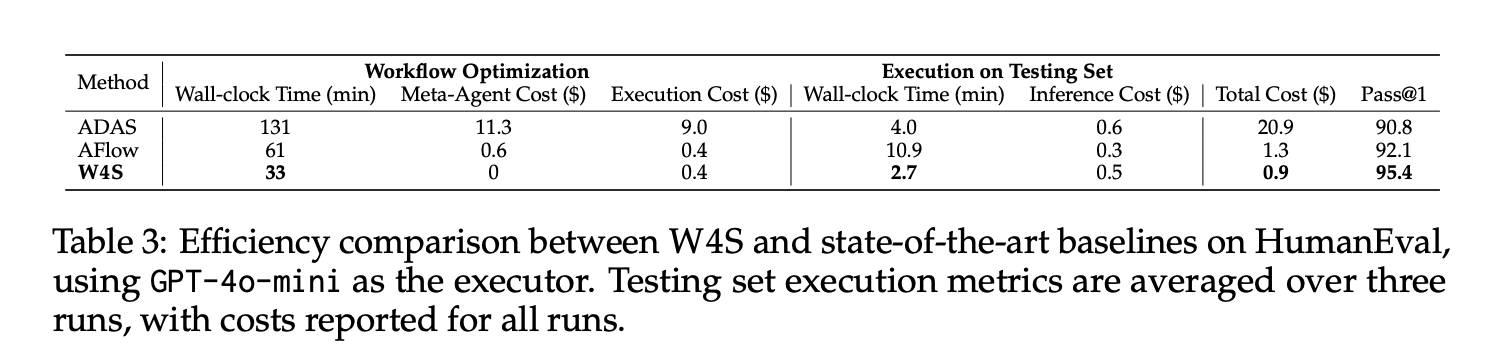

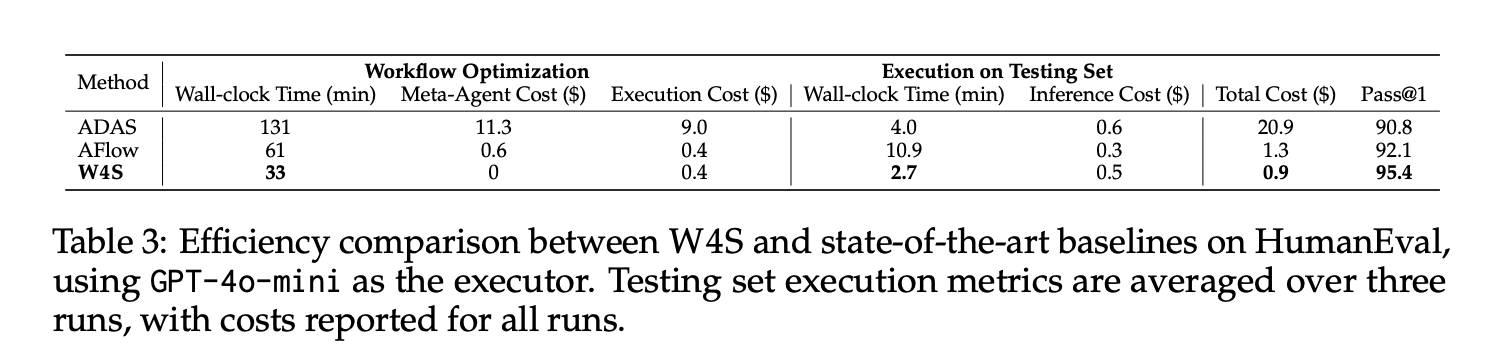

Understanding the Results

On HumanEval with GPT-4o-mini as executor, W4S achieves Pass@1 of 95.4, with about 33 minutes of workflow optimization, zero meta-agent API cost, an optimization execution cost of about 0.4 dollars, and about 2.7 minutes to execute the test set at about 0.5 dollars, for a total of about 0.9 dollars. Under the same executor, AFlow and ADAS trail this number. The reported average gains against the strongest automated baseline range from 2.9% to 24.6% across 11 benchmarks.

On math transfer, the meta-agent is trained on GSM Plus and MGSM with GPT-3.5-Turbo as executor, then evaluated on GSM8K, GSM Hard, and SVAMP. The paper reports 86.5 on GSM8K and 61.8 on GSM Hard, both above automated baselines. This indicates that the learned orchestration transfers to related tasks without retraining the executor.

Across seen tasks with GPT-4o-mini as executor, W4S surpasses training-free automated methods that do not learn a planner. The study also runs ablations where the meta-agent is trained by supervised fine-tuning rather than RLAO; the RLAO agent yields better accuracy under the same compute budget. The research team also includes a GRPO baseline on a 7B weak model for GSM Hard, and W4S outperforms it under limited compute.

Iteration budgets matter. The research team sets W4S to about 10 optimization turns on main tables, while AFlow runs about 20 turns and ADAS runs about 30 turns. Despite fewer turns, W4S achieves higher accuracy. This suggests that learned planning over code, combined with validation feedback, makes the search more sample efficient.

Key Takeaways

- W4S trains a 7B weak meta-agent with RLAO to write Python workflows that harness stronger executors, with workflow design modeled as a multi-turn MDP.

- On HumanEval with GPT-4o-mini as executor, W4S reaches Pass@1 of 95.4, with about 33 minutes of optimization and about 0.9 dollars total cost, beating automated baselines under the same executor.

- Across 11 benchmarks, W4S improves over the strongest baseline by 2.9% to 24.6%, while avoiding fine-tuning of the strong model.

- The method runs an iterative loop: it generates a workflow, executes it on validation data, then refines it using feedback.

- ADAS and AFlow also program or search over code workflows. W4S differs by training a planner with offline reinforcement learning.

W4S targets orchestration, not model weights, and trains a 7B meta-agent to program workflows that call stronger executors. W4S formalizes workflow design as a multi-turn MDP and optimizes the planner with RLAO using offline trajectories and reward-weighted regression. Reported results show Pass@1 of 95.4 on HumanEval with GPT-4o-mini, average gains of 2.9% to 24.6% across 11 benchmarks, and about 1 GPU hour of training for the meta-agent. The framing compares cleanly with ADAS and AFlow, which search agent designs or code graphs, while W4S fixes the executor and learns the planner.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.